As you’re well aware, cybersecurity and appsec incidents are a regular feature in the news. I try to avoid jumping immediately on the analysis bandwagon, preferring instead to wait for a deeper understanding of what went wrong so we can think about how to avoid it in the future.

In this case, I’d like to talk about the Superfish incident that was discovered on Lenovo laptops earlier this year.

In brief, Lenovo (like many if not most hardware manufacturers) chose to install software that allows them to advertise to their customers. I don’t wish to pick on them particularly in this case, as the problem is rampant, especially in the mobile arena. Rather, let’s think about Lenovo as a cautionary tale in how things can go wrong. The Superfish software installed on their laptops allowed ads to be injected into the user’s web traffic. It did this by installing its own self-signed security certificate.

For those unfamiliar with the role of certificates in secure communication, certificates are supposed to certify that you are in fact who you say you are. This gives me confidence that when my browser says I’m buying something from Amazon, it’s really Amazon and not some funny phishing web site trying to steal from me. I say “supposed” because you’re allowed to create a self-signed certificate, which in essence says “I am who I say I am.” If this sounds scary, then you understand it.

The problem is even broader when it’s a self-signed root certificate. At this point you now have the person issuing the root certificate telling you “Everyone is who I say they are.” In other words, the certificate can convince your browser that Bob’s Criminal Bank™ is really your local bank. TNW News said “There is simply no reason for Superfish — or anyone else — to install a root certificate in this manner” and I wholeheartedly agree. TNW News again: “Either Superfish’s intent in installing one was malicious or due to sloppy development.”
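To make the “Everyone is who I say they are” point concrete, here is a minimal sketch of how a trust store decides whom to believe. It is a toy model and not real cryptography: actual browsers verify signatures, hostnames, and expiry dates, none of which is modeled here. The names `Cert`, `is_trusted`, “Legit CA”, “Rogue Adware CA”, and `yourbank.example` are all hypothetical and chosen only for illustration.

```python
# Toy model of certificate trust. NOT real cryptography: real validation
# checks signatures, hostnames, and expiry. All names here are hypothetical.
from dataclasses import dataclass


@dataclass(frozen=True)
class Cert:
    subject: str  # who the certificate claims to identify
    issuer: str   # who vouches for that claim


def is_trusted(chain, trusted_roots):
    """Return True if the chain links up to a self-signed root in the trust store.

    `chain` is ordered from the site certificate up to the root.
    """
    if not chain:
        return False
    # Every certificate must be vouched for by the next one up the chain.
    for child, parent in zip(chain, chain[1:]):
        if child.issuer != parent.subject:
            return False
    root = chain[-1]
    # The root vouches for itself; it is believed only because it is installed.
    return root.issuer == root.subject and root.subject in trusted_roots


rogue_root = Cert(subject="Rogue Adware CA", issuer="Rogue Adware CA")
fake_bank = Cert(subject="yourbank.example", issuer="Rogue Adware CA")

factory_store = {"Legit CA"}
tampered_store = factory_store | {"Rogue Adware CA"}

print(is_trusted([fake_bank, rogue_root], factory_store))   # False
print(is_trusted([fake_bank, rogue_root], tampered_store))  # True
```

The only difference between the two results is one extra entry in the trust store. Once a rogue root is installed, anything it vouches for looks legitimate, including a certificate for a bank it has nothing to do with.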

Reasonable people can disagree on the tradeoff between advertising, privacy, and ad-supported benefits like free email. This method of advertising, however, is beyond the scope of any responsible behavior. It puts users at great risk without giving them any additional benefit. What reasonable people don’t do is put others at risk for their own benefit. Breaking security by stepping into the middle of secure transactions isn’t reasonable.

What’s interesting (or disturbing depending on your point of view) is how Lenovo responded to this problem. When researchers published the problem, the CTO of Lenovo said “We’re not trying to get into an argument with the security guys. They’re dealing with theoretical concerns. We have no insight that anything nefarious has occurred.”

Obviously I can’t tell you how much of their statement was spin and how much they really believed; I hope it was mostly the former. This is a great example of how not to do cybersecurity. It goes to the core of why we currently have so many appsec / swsec problems. People want to simply patch up systems after a breach has occurred, rather than build fundamentally secure software using sound principles.

CSO Online describes the reality quite well: “It’s a classic example of a Man-in-the-Middle attack, one that wouldn’t be too difficult to conduct based on the design of the software and its security protocols. Worse, the risk remains even after the user uninstalls the Visual Discovery software.”

The idea that a vulnerability is merely theoretical is not only ignorant but dangerous. Software exploits occur because bad actors operate by finding unexpected loopholes in a software system. Think of it this way: if you left your door unlocked, is it a security issue? Or perhaps, if you’re a philosopher, “If an unlocked door is never entered, is it really unlocked?” One could contend that the risk is theoretical, but most of us would say that such a statement is ridiculous. (Props to those who live in an area where door security isn’t required.)

Software vulnerabilities are exactly like unlocked doors. They are not theoretical; they are actual holes or openings in your software that can and probably will be exploited. If we want to have secure applications, we must grow up and stop pretending that the vulnerabilities we’re facing aren’t real. We have to move to a proactive, preventative, engineering-based approach that treats vulnerabilities as real risks. Only then can we be secure.

[Update – Jeff Williams, whom I respect a lot as a long-term advocate of software security, had a comment about this. For some reason my comment system currently seems broken, so I’m posting a link to his response on LinkedIn. I do agree that tool noise and false alarm rates are problematic in security analysis tools, but I don’t agree that this lets company representatives and developers off the hook for claiming a vulnerability is theoretical.]

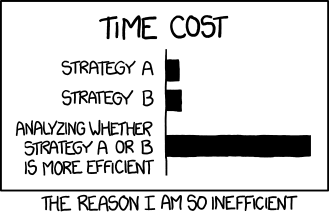

[Update – I get that there are exploits that are theoretical. My point is that the label is misused by those who are trying to avoid fixing a problem or taking responsibility. For example, early Heartbleed responses were full of “it’s theoretical”, which was also true for the airline wifi attack demonstrated against United. I certainly don’t disagree that there is such a thing as a theoretical exploit, but sometimes we spend more effort trying to disprove the exploit than would be necessary to fix it.]

[Update – I wrote a follow-up discussing why people might call a vulnerability false or theoretical.]
